
From Wikipedia, the free encyclopedia


Big data[1][2] is the term for a collection of data sets so large and complex that it becomes difficult to process using on-hand database management tools or traditional data processing applications. The challenges include capture, curation, storage,[3] search, sharing, transfer, analysis,[4] and visualization. The trend to larger data sets is due to the additional information derivable from analysis of a single large set of related data, as compared to separate smaller sets with the same total amount of data, allowing correlations to be found to "spot business trends, determine quality of research, prevent diseases, link legal citations, combat crime, and determine real-time roadway traffic conditions."[5][6][7]

A visualization created by IBM of Wikipedia edits. At multiple terabytes in size, the text and images of Wikipedia are a classic example of big data.

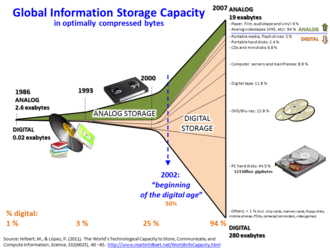

Growth and digitization of global information storage capacity (source: http://www.martinhilbert.net/WorldInfoCapacity.html)

Big data is difficult to work with using most relational database management systems and desktop statistics and visualization packages, requiring instead "massively parallel software running on tens, hundreds, or even thousands of servers".[17] What is considered "big data" varies depending on the capabilities of the organization managing the set, and on the capabilities of the applications that are traditionally used to process and analyze the data set in its domain. "For some organizations, facing hundreds of gigabytes of data for the first time may trigger a need to reconsider data management options. For others, it may take tens or hundreds of terabytes before data size becomes a significant consideration."[18]


Definition

Big data usually includes data sets with sizes beyond the ability of commonly used software tools to capture, curate, manage, and process the data within a tolerable elapsed time.[19] Big data sizes are a constantly moving target; as of 2012 they ranged from a few dozen terabytes to many petabytes of data in a single data set.

In a 2001 research report[20] and related lectures, META Group (now Gartner) analyst Doug Laney defined data growth challenges and opportunities as being three-dimensional: increasing volume (amount of data), velocity (speed of data in and out), and variety (range of data types and sources). Gartner, and now much of the industry, continue to use this "3Vs" model for describing big data.[21] In 2012, Gartner updated its definition as follows: "Big data is high volume, high velocity, and/or high variety information assets that require new forms of processing to enable enhanced decision making, insight discovery and process optimization."[22] Some organizations add a fourth V, "veracity", to this description.[23]

While Gartner's definition (the 3Vs) is still widely used, the growing maturity of the concept has sharpened the distinction between big data and business intelligence with respect to data and their use:

- Business intelligence uses descriptive statistics on data with high information density to measure things, detect trends, etc.;

- Big data uses inductive statistics and concepts from nonlinear system identification[24] to infer laws (regressions, nonlinear relationships, and causal effects) from large data sets[25], revealing relationships and dependencies and predicting outcomes and behaviors.[24][26]
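To make the contrast concrete, here is a minimal Python sketch, assuming a small hypothetical data set (advertising spend versus sales) and plain least-squares regression as a stand-in for "inferring laws". The hypothetical `describe` function summarizes what the data already show, in the business-intelligence style; the hypothetical `fit_line` function induces a linear relationship that can then be used for prediction. Real big data work would involve far larger data and often nonlinear models.

```python
from statistics import mean, pstdev
from typing import Dict, List, Sequence, Tuple


def describe(values: Sequence[float]) -> Dict[str, float]:
    """Descriptive statistics: summarize the data as they are (BI style)."""
    return {
        "mean": mean(values),
        "stdev": pstdev(values),
        "min": min(values),
        "max": max(values),
    }


def fit_line(xs: Sequence[float], ys: Sequence[float]) -> Tuple[float, float]:
    """Inductive statistics: infer y ~ a + b*x by ordinary least squares."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equally long sequences with at least 2 points")
    x_bar, y_bar = mean(xs), mean(ys)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("xs must not all be equal")
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


# Hypothetical observations: advertising spend (x) and sales (y).
ad_spend: List[float] = [1.0, 2.0, 3.0, 4.0, 5.0]
sales: List[float] = [2.1, 3.9, 6.2, 7.8, 10.1]

summary = describe(sales)                     # what happened
intercept, slope = fit_line(ad_spend, sales)  # a "law" inferred from the data
forecast = intercept + slope * 6.0            # prediction for an unseen input
print(summary)
print(f"sales ~ {intercept:.2f} + {slope:.2f} * spend; forecast at 6: {forecast:.2f}")
```

The descriptive summary only restates the observed sales, while the fitted line supports a statement about an input that was never observed, which is the inductive step the list item describes.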

Big science

The Large Hadron Collider experiments represent about 150 million sensors delivering data 40 million times per second. There are nearly 600 million collisions per second. After filtering, and refraining from recording more than 99.999% of these streams, there are 100 collisions of interest per second.[27][28][29]

- As a result, working with less than 0.001% of the sensor stream data, the data flow from all four LHC experiments represents an annual rate of 25 petabytes before replication (as of 2012). This becomes nearly 200 petabytes after replication.

- If all sensor data were recorded at the LHC, the data flow would be extremely hard to work with. It would exceed an annual rate of 150 million petabytes, or nearly 500 exabytes per day, before replication. To put the number in perspective, this is equivalent to 500 quintillion (5×10^20) bytes per day, almost 200 times more than all the other sources in the world combined.
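The LHC figures can be cross-checked with a few lines of Python. This is a back-of-the-envelope sketch that assumes decimal units (1 PB = 10^15 bytes, 1 EB = 10^18 bytes) and a 365-day year; the function names are illustrative only.

```python
PB = 10**15          # bytes in a decimal petabyte (assumed convention)
EB = 10**18          # bytes in a decimal exabyte
DAYS_PER_YEAR = 365


def retained_fraction(kept_per_s: float, total_per_s: float) -> float:
    """Share of collision events kept after filtering."""
    return kept_per_s / total_per_s


def annual_pb_to_daily_eb(pb_per_year: float) -> float:
    """Convert an annual data rate in petabytes to exabytes per day."""
    return pb_per_year * PB / DAYS_PER_YEAR / EB


kept = retained_fraction(100, 600e6)       # 100 of ~600 million collisions/s
raw_daily = annual_pb_to_daily_eb(150e6)   # unfiltered stream, 150 million PB/yr
replication_factor = 200 / 25              # ~200 PB after vs 25 PB before

print(f"kept fraction: {kept:.2e} ({kept * 100:.6f}%)")
print(f"unfiltered rate: ~{raw_daily:.0f} EB/day")
print(f"replication factor: ~{replication_factor:.0f}x")
```

The retained fraction comes out well below 0.001%, and exactly 150 million PB per year works out to a little over 400 EB per day; because the text gives 150 million PB as a lower bound ("would exceed"), this is consistent in order of magnitude with "nearly 500 exabytes per day".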

Science and research

- When the Sloan Digital Sky Survey (SDSS) began collecting astronomical data in 2000, it amassed more in its first few weeks than all data collected in the history of astronomy. Continuing at a rate of about 200 GB per night, SDSS has amassed more than 140 terabytes of information. When the Large Synoptic Survey Telescope, successor to SDSS, comes online in 2016 it is anticipated to acquire that amount of data every five days.[5]

- Decoding the human genome originally took 10 years; it can now be done in less than a week. DNA sequencers have cut the cost of sequencing by a factor of 10,000 over the last ten years, a reduction 100 times larger than that predicted by Moore's Law.[30]

- Computational social science: Tobias Preis et al. used Google Trends data to demonstrate that Internet users from countries with a higher per capita gross domestic product (GDP) are more likely to search for information about the future than information about the past. The findings suggest there may be a link between online behaviour and real-world economic indicators.[31][32][33] The authors examined Google query logs and computed the ratio of the volume of searches for the coming year ('2011') to the volume of searches for the previous year ('2009'), which they call the 'future orientation index'.[34] They compared the future orientation index to the per capita GDP of each country and found a strong tendency for countries in which Google users enquire more about the future to exhibit a higher GDP. The results hint at a possible relationship between the economic success of a country and the information-seeking behavior of its citizens captured in big data.

- The NASA Center for Climate Simulation (NCCS) stores 32 petabytes of climate observations and simulations on the Discover supercomputing cluster.[35]

- Tobias Preis and his colleagues Helen Susannah Moat and H. Eugene Stanley introduced a method to identify online precursors for stock market moves, using trading strategies based on search volume data provided by Google Trends.[36] Their analysis of Google search volume for 98 terms of varying financial relevance, published in Scientific Reports,[37] suggests that increases in search volume for financially relevant search terms tend to precede large losses in financial markets.[38][39][40][41][42][43][44][45]
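The two Google Trends measures described in this list reduce to simple arithmetic. The following Python sketch is illustrative only: the search counts are hypothetical, and `weekly_signals` is a simplified rule in the spirit of the Preis, Moat and Stanley strategy (compare a week's search volume with the mean of the preceding weeks; a rise suggests selling, a fall suggests buying). It is not a reproduction of their published method or data.

```python
from typing import List, Optional, Sequence


def future_orientation_index(searches_next_year: float, searches_prev_year: float) -> float:
    """Ratio of search volume for the coming year to that for the previous year."""
    if searches_prev_year <= 0:
        raise ValueError("previous-year search volume must be positive")
    return searches_next_year / searches_prev_year


def weekly_signals(volumes: Sequence[float], window: int = 3) -> List[Optional[str]]:
    """Hypothetical weekly signal derived from search volume.

    'sell' if this week's volume is above the mean of the previous `window`
    weeks, 'buy' if below, None if equal or if there is not enough history.
    A signal computed for week t would be acted on in the following week.
    """
    signals: List[Optional[str]] = []
    for t, n in enumerate(volumes):
        if t < window:
            signals.append(None)  # not enough history yet
            continue
        baseline = sum(volumes[t - window:t]) / window
        delta = n - baseline
        signals.append("sell" if delta > 0 else "buy" if delta < 0 else None)
    return signals


foi = future_orientation_index(1200, 800)  # hypothetical counts for '2011' vs '2009'
signals = weekly_signals([10, 12, 11, 15, 9, 11], window=3)
print(foi)
print(signals)
```

A future orientation index above 1 means more searches for the coming year than for the past year; the trading rule only ever looks backward from the week being evaluated, so it could in principle be run on live data.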

Government

- In 2012, the Obama administration announced the Big Data Research and Development Initiative, which explored how big data could be used to address important problems faced by the government.[46] The initiative was composed of 84 different big data programs spread across six departments.[47]

- Big data analysis played a large role in Barack Obama's successful 2012 re-election campaign.[48]

- The United States Federal Government owns six of the ten most powerful supercomputers in the world.[49]

- The Utah Data Center is a data center currently being constructed by the United States National Security Agency. When finished, the facility will be able to handle a large amount of information collected by the NSA over the Internet. The exact amount of storage space is unknown, but more recent sources claim it will be on the order of a few exabytes.[50][51][52]

Private sector

- eBay.com uses two data warehouses of 7.5 petabytes and 40 PB, as well as a 40 PB Hadoop cluster, for search, consumer recommendations, and merchandising (source: "Inside eBay's 90PB data warehouse").

- Amazon.com handles millions of back-end operations every day, as well as queries from more than half a million third-party sellers. The core technology that keeps Amazon running is Linux-based and as of 2005 they had the world’s three largest Linux databases, with capacities of 7.8 TB, 18.5 TB, and 24.7 TB.[53]

- Walmart handles more than 1 million customer transactions every hour, which are imported into databases estimated to contain more than 2.5 petabytes (2560 terabytes) of data, the equivalent of 167 times the information contained in all the books in the US Library of Congress.[5]

- Facebook handles 50 billion photos from its user base.[54]

- FICO Falcon Credit Card Fraud Detection System protects 2.1 billion active accounts world-wide.[55]

- The volume of business data worldwide, across all companies, doubles every 1.2 years, according to estimates.[56]

- Windermere Real Estate uses anonymous GPS signals from nearly 100 million drivers to help new home buyers determine their typical drive times to and from work throughout various times of the day.[57]

International development

Research on the effective use of information and communication technologies for development (also known as ICT4D) suggests that big data technology can make important contributions to international development but also presents unique challenges.[58][59] Advancements in big data analysis offer cost-effective opportunities to improve decision-making in critical development areas such as health care, employment, economic productivity, crime, security, and natural disaster and resource management.[60][61] However, longstanding challenges for developing regions, such as inadequate technological infrastructure and scarcity of economic and human resources, exacerbate existing concerns with big data such as privacy, imperfect methodology, and interoperability issues.[60]

Market

"Big Data" has increased the demand for information management specialists; Software AG, Oracle Corporation, IBM, Microsoft, SAP, EMC, HP and Dell have spent more than $15 billion on software firms specializing in data management and analytics. In 2010, this industry on its own was worth more than $100 billion and was growing at almost 10 percent a year, about twice as fast as the software business as a whole.[5]

Developed economies make increasing use of data-intensive technologies. There are 4.6 billion mobile-phone subscriptions worldwide, and between 1 billion and 2 billion people access the internet.[5] Between 1990 and 2005, more than 1 billion people worldwide entered the middle class; as more people gain income, more of them become literate, which in turn leads to information growth. The world's effective capacity to exchange information through telecommunication networks was 281 petabytes in 1986, 471 petabytes in 1993, 2.2 exabytes in 2000, and 65 exabytes in 2007,[14] and it is predicted that the amount of traffic flowing over the internet will reach 667 exabytes annually by 2013.[5]
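The telecommunication capacity figures quoted in this section imply a compound annual growth rate (CAGR), (end / start)^(1 / years) - 1. A small Python sketch, assuming decimal units (1 EB = 1000 PB) for converting the quoted values; the `cagr` helper is a hypothetical name:

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate between two observations."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("values and period must be positive")
    return (end / start) ** (1 / years) - 1


# Effective telecommunication capacity in petabytes (assumes 1 EB = 1000 PB).
capacity_pb = {1986: 281, 1993: 471, 2000: 2_200, 2007: 65_000}

years = sorted(capacity_pb)
for start_year, end_year in zip(years, years[1:]):
    rate = cagr(capacity_pb[start_year], capacity_pb[end_year], end_year - start_year)
    print(f"{start_year}-{end_year}: {rate:.1%} per year")

overall = cagr(capacity_pb[1986], capacity_pb[2007], 2007 - 1986)
print(f"1986-2007: {overall:.1%} per year")
```

The per-period rates show the growth accelerating sharply after 2000, which a single overall average of roughly 30% per year would hide.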

Architecture

In 2004, Google published a paper on a process called MapReduce that uses a massively parallel architecture. The MapReduce framework provides a parallel processing model and an associated implementation for processing huge amounts of data. With MapReduce, queries are split and distributed across parallel nodes and processed in parallel (the Map step). The results are then gathered and delivered (the Reduce step). The framework was very successful,[62] so others wanted to replicate the algorithm, and an implementation of the MapReduce framework was adopted by an Apache open-source project named Hadoop.[63]

MIKE2.0 is an open approach to information management that acknowledges the need for revisions due to big data implications, set out in an article titled "Big Data Solution Offering".[64] The methodology addresses handling big data in terms of useful permutations of data sources, complexity in interrelationships, and difficulty in deleting (or modifying) individual records.[65]
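The Map and Reduce steps can be illustrated with the classic word-count example. This is a minimal, single-machine Python sketch, not Google's implementation or Hadoop's API: threads from `concurrent.futures` stand in for parallel nodes, and `map_phase`, `shuffle`, `reduce_phase` and `word_count` are hypothetical names. The explicit shuffle step groups intermediate values by key so that each reduce call sees every value for one key.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, Iterable, List, Tuple

Pair = Tuple[str, int]


def map_phase(split: str) -> List[Pair]:
    """Map step: emit (word, 1) for every word in one input split."""
    return [(word.lower(), 1) for word in split.split()]


def shuffle(mapped: Iterable[List[Pair]]) -> Dict[str, List[int]]:
    """Group intermediate values by key, as the framework does between steps."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for pairs in mapped:
        for key, value in pairs:
            groups[key].append(value)
    return groups


def reduce_phase(key: str, values: List[int]) -> Pair:
    """Reduce step: combine all values emitted for one key."""
    return key, sum(values)


def word_count(splits: List[str], workers: int = 4) -> Dict[str, int]:
    # Each split is mapped independently; threads stand in for cluster nodes.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        mapped = list(pool.map(map_phase, splits))
    grouped = shuffle(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


splits = ["big data is big", "data moves fast", "Big ideas"]
counts = word_count(splits)
print(counts)
```

Because map calls share no state and each key is reduced independently, both steps can be spread across many machines; that independence is what lets a framework such as Hadoop scale the same pattern out.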

Recent studies suggest a multi-layer architecture as one option for handling big data. A distributed parallel architecture spreads data across multiple processing units, and the parallel processing units deliver data much faster by improving processing speeds. This type of architecture inserts data into a parallel DBMS, which implements the MapReduce and Hadoop frameworks. This type of framework aims to make the processing power transparent to the end user by using a front-end application server.[66]
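The scatter-gather pattern behind a distributed parallel architecture can be sketched briefly. In this Python sketch, all class names are hypothetical, threads stand in for separate processing units, and hash partitioning is an assumed placement scheme. A front-end object routes each record to one unit and fans queries out to all units in parallel, so the caller never deals with the partitioning directly. It illustrates the idea only; it is not a parallel DBMS or a Hadoop deployment.

```python
import zlib
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Tuple

Row = Tuple[str, float]


class ProcessingUnit:
    """One hypothetical processing unit holding a shard of the data."""

    def __init__(self) -> None:
        self.rows: List[Row] = []

    def scan(self, predicate: Callable[[Row], bool]) -> List[Row]:
        return [row for row in self.rows if predicate(row)]


class FrontEnd:
    """Hypothetical front-end server that hides the partitioning from clients."""

    def __init__(self, n_units: int = 4) -> None:
        if n_units < 1:
            raise ValueError("need at least one processing unit")
        self.units = [ProcessingUnit() for _ in range(n_units)]

    def _route(self, key: str) -> ProcessingUnit:
        # crc32 gives the same placement on every run, unlike str hash().
        return self.units[zlib.crc32(key.encode("utf-8")) % len(self.units)]

    def insert(self, key: str, value: float) -> None:
        self._route(key).rows.append((key, value))

    def query(self, predicate: Callable[[Row], bool]) -> List[Row]:
        # Scatter the scan to every unit in parallel, then gather and merge.
        with ThreadPoolExecutor(max_workers=len(self.units)) as pool:
            partial = list(pool.map(lambda unit: unit.scan(predicate), self.units))
        return sorted(row for rows in partial for row in rows)


front_end = FrontEnd(n_units=4)
for i in range(10):
    front_end.insert(f"sensor-{i}", float(i))
hot = front_end.query(lambda row: row[1] >= 7.0)
print(hot)
print([len(unit.rows) for unit in front_end.units])
```

The client calls only `insert` and `query`; adding processing units changes where rows live but not how the client interacts with the front end, which is the transparency the paragraph describes.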

Technologies

DARPA’s Topological Data Analysis program (showing a Klein bottle) seeks the fundamental structure of massive data sets.

Some, but not all, massively parallel processing (MPP) relational databases can store and manage petabytes of data. Implicit in this is the ability to load, monitor, back up, and optimize the use of the large data tables in the RDBMS.[70]

DARPA’s Topological Data Analysis program seeks the fundamental structure of massive data sets and in 2008 the technology went public with the launch of a company called Ayasdi.[71]

Practitioners of big data analytics are generally hostile to slower shared storage,[72] preferring direct-attached storage (DAS) in its various forms, from solid-state drives (SSD) to high-capacity SATA disks buried inside parallel processing nodes. Shared storage architectures, such as storage area networks (SAN) and network-attached storage (NAS), are perceived as relatively slow, complex, and expensive. These qualities are not consistent with big data analytics systems, which thrive on system performance, commodity infrastructure, and low cost.

Real or near-real-time information delivery is one of the defining characteristics of big data analytics, so latency is avoided whenever and wherever possible. Data in memory is good; data on spinning disk at the other end of a Fibre Channel (FC) SAN connection is not. At the scale needed for analytics applications, a SAN costs much more than other storage techniques.

There are advantages as well as disadvantages to shared storage in big data analytics, but big data analytics practitioners as of 2011 did not favour it.[73]

Research activities

In March 2012, the White House announced a national "Big Data Initiative" in which six Federal departments and agencies committed more than $200 million to big data research projects.[74] The initiative included a National Science Foundation "Expeditions in Computing" grant of $10 million over 5 years to the AMPLab[75] at the University of California, Berkeley.[76] The AMPLab also received funds from DARPA and over a dozen industrial sponsors, and uses big data to attack a wide range of problems, from predicting traffic congestion[77] to fighting cancer.[78]

The White House Big Data Initiative also included a commitment by the Department of Energy to provide $25 million in funding over 5 years to establish the Scalable Data Management, Analysis and Visualization (SDAV) Institute,[79] led by the Energy Department’s Lawrence Berkeley National Laboratory. The SDAV Institute aims to bring together the expertise of six national laboratories and seven universities to develop new tools to help scientists manage and visualize data on the Department’s supercomputers.

The U.S. state of Massachusetts announced the Massachusetts Big Data Initiative in May 2012, which provides funding from the state government and private companies to a variety of research institutions.[80] The Massachusetts Institute of Technology hosts the Intel Science and Technology Center for Big Data in the MIT Computer Science and Artificial Intelligence Laboratory, combining government, corporate, and institutional funding and research efforts.[81]

The European Commission is funding a two-year Big Data Public Private Forum through its Seventh Framework Program to engage companies, academics and other stakeholders in discussing big data issues. The project aims to define a research and innovation strategy to guide supporting actions from the European Commission in the successful implementation of the big data economy. Outcomes of this project will be used as input for Horizon 2020, the Commission's next framework program.[82]

The IBM-sponsored 37th annual "Battle of the Brains" student Big Data championship will be held in July 2013.[83] The inaugural professional Big Data World Championship is to be held in Dallas, Texas, in 2014.[84]

To make manufacturing in the United States more competitive globally, there is a need to integrate more American ingenuity and innovation into manufacturing. The National Science Foundation has therefore granted funding to the Industry/University Cooperative Research Center for Intelligent Maintenance Systems (IMS) at the University of Cincinnati to focus on developing advanced predictive tools and techniques applicable in a big data environment.[85][86] In May 2013, the IMS Center held an industry advisory board meeting focusing on big data, where presenters from various industrial companies discussed their concerns, issues, and future goals in the big data environment.

Applications

Manufacturing

According to the TCS 2013 Global Trend Study, improvements in supply planning and product quality are the greatest benefits of big data for manufacturing.[87] Big data provides an infrastructure for transparency in the manufacturing industry, that is, the ability to unravel uncertainties such as inconsistent component performance and availability. Predictive manufacturing, as an approach toward near-zero downtime and transparency, requires vast amounts of data and advanced prediction tools to systematically process data into useful information.[88] A conceptual framework of predictive manufacturing begins with data acquisition, where different types of sensory data, such as acoustics, vibration, pressure, current, voltage and controller data, are available. Vast amounts of sensory data, together with historical data, make up the "big data" in manufacturing. This big data acts as the input to predictive tools and preventive strategies such as Prognostics and Health Management (PHM).[85]

Critique

Critiques of the big data paradigm come in two flavors: those that question the implications of the approach itself, and those that question the way it is currently done.

Critiques of the big data paradigm

"A crucial problem is that we do not know much about the underlying empirical micro-processes that lead to the emergence of the[se] typical network characteristics of Big Data".[19] In their critique, Snijders, Matzat, and Reips point out that very strong assumptions are often made about mathematical properties that may not at all reflect what is really going on at the level of micro-processes. Mark Graham has leveled broad critiques at Chris Anderson's assertion that big data will spell the end of theory, focusing in particular on the notion that big data will always need to be contextualized in their social, economic and political contexts.[89] Even as companies invest eight- and nine-figure sums to derive insight from information streaming in from suppliers and customers, less than 40% of employees have sufficiently mature processes and skills to do so. To overcome this insight deficit, "big data", no matter how comprehensive or well analyzed, needs to be complemented by "big judgment", according to an article in the Harvard Business Review.[90]

Along the same lines, it has been pointed out that decisions based on the analysis of big data are inevitably "informed by the world as it was in the past, or, at best, as it currently is".[60] Fed by large amounts of data on past experiences, algorithms can predict future developments if the future is similar to the past. If the system's dynamics change in the future, the past can say little about the future. Anticipating such changes would require a thorough understanding of the system's dynamics, which implies theory.[91] In response to this critique, it has been suggested to combine big data approaches with computer simulations, such as agent-based models.[60] Agent-based models are increasingly successful at predicting the outcome of social complexities, even for unknown future scenarios, through computer simulations based on a collection of mutually interdependent algorithms.[92][93] In addition, multivariate methods that probe for the latent structure of the data, such as factor analysis and cluster analysis, have proven useful as analytic approaches that go well beyond the bivariate approaches (cross-tabs) typically employed with smaller data sets.
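Cluster analysis, one of the multivariate methods mentioned in the critique, can be sketched in a few lines. The Python below implements Lloyd's k-means algorithm with naive seeding (the first k points) on hypothetical two-dimensional data. It is a teaching sketch, not the method of any study cited here; production work would use a library implementation with better initialization.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, ...]


def _nearest(point: Point, centroids: Sequence[Point]) -> int:
    """Index of the centroid closest to `point` (Euclidean distance)."""
    return min(range(len(centroids)), key=lambda i: math.dist(point, centroids[i]))


def _mean(points: Sequence[Point]) -> Point:
    """Coordinate-wise mean of a non-empty group of points."""
    return tuple(sum(coords) / len(points) for coords in zip(*points))


def kmeans(points: Sequence[Point], k: int, max_iter: int = 100) -> Tuple[List[int], List[Point]]:
    """Lloyd's k-means: alternate assignment and centroid update until stable."""
    if not 0 < k <= len(points):
        raise ValueError("k must be between 1 and the number of points")
    centroids: List[Point] = [tuple(p) for p in points[:k]]  # naive seeding
    for _ in range(max_iter):
        labels = [_nearest(p, centroids) for p in points]
        updated: List[Point] = []
        for i in range(k):
            members = [p for p, label in zip(points, labels) if label == i]
            # Keep an empty cluster's centroid where it was.
            updated.append(_mean(members) if members else centroids[i])
        if updated == centroids:
            break
        centroids = updated
    labels = [_nearest(p, centroids) for p in points]  # final assignment
    return labels, centroids


# Hypothetical 2-D observations forming two visible groups.
data = [(1.0, 1.0), (9.0, 9.0), (1.5, 2.0), (8.0, 8.5), (1.2, 0.8), (9.5, 8.0)]
labels, centroids = kmeans(data, k=2)
print(labels)
print(centroids)
```

Unlike a cross-tab of two variables, the algorithm uses all coordinates at once to find groups, which is why such methods scale naturally to data sets with many attributes.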

In health and biology, conventional scientific approaches are based on experimentation. For these approaches, the limiting factor is the relevant data that can confirm or refute the initial hypothesis.[94] A new postulate is now accepted in the biosciences: the information provided by data in huge volumes (omics), without a prior hypothesis, is complementary to, and sometimes necessary for, conventional approaches based on experimentation. In these massive approaches, it is the formulation of a relevant hypothesis to explain the data that is the limiting factor. The logic of inquiry is reversed, and the limits of induction ("the glory of science and the scandal of philosophy", C. D. Broad, 1926) must be considered.

Privacy advocates are concerned about the threat to privacy represented by increasing storage and integration of personally identifiable information; expert panels have released various policy recommendations to conform practice to expectations of privacy.[95][96][97]

Critiques of big data execution

Researcher Danah Boyd has raised concerns that the use of big data in science can neglect principles such as choosing a representative sample, because researchers are too concerned with actually handling the huge amounts of data.[98] This approach may lead to biased results. Integration across heterogeneous data resources (some that might be considered "big data" and others not) presents formidable logistical as well as analytical challenges, but many researchers argue that such integrations are likely to represent the most promising new frontiers in science.[99]

See also

- Data Defined Storage

- Nonlinear system identification

- Operations research

- Supercomputer

- Tuple space

- Unstructured data

- MapReduce

- Programming with Big Data in R.

References

- Jump up ^ White, Tom (10 May 2012). Hadoop: The Definitive Guide. O'Reilly Media. p. 3. ISBN 978-1-4493-3877-0.

- Jump up ^ "MIKE2.0, Big Data Definition".

- Jump up ^ Kusnetzky, Dan. "What is "Big Data?"". ZDNet.

- Jump up ^ Vance, Ashley (22 April 2010). "Start-Up Goes After Big Data With Hadoop Helper". New York Times Blog.

- ^ Jump up to: a b c d e f "Data, data everywhere". The Economist. 25 February 2010. Retrieved 9 December 2012.

- Jump up ^ "E-Discovery Special Report: The Rising Tide of Nonlinear Review". Hudson Global. Retrieved 1 July 2012. by Cat Casey and Alejandra Perez

- Jump up ^ "What Technology-Assisted Electronic Discovery Teaches Us About The Role Of Humans In Technology — Re-Humanizing Technology-Assisted Review". Forbes. Retrieved 1 July 2012.

- Jump up ^ Francis, Matthew (2012-04-02). "Future telescope array drives development of exabyte processing". Retrieved 2012-10-24.

- Jump up ^ "Community cleverness required". Nature 455 (7209): 1. 4 September 2008. doi:10.1038/455001a.

- Jump up ^ "Sandia sees data management challenges spiral". HPC Projects. 4 August 2009.

- Jump up ^ Reichman, O.J.; Jones, M.B.; Schildhauer, M.P. (2011). "Challenges and Opportunities of Open Data in Ecology". Science 331 (6018): 703–5. doi:10.1126/science.1197962.

- Jump up ^ Hellerstein, Joe (9 November 2008). "Parallel Programming in the Age of Big Data". Gigaom Blog.

- Jump up ^ Segaran, Toby; Hammerbacher, Jeff (2009). Beautiful Data: The Stories Behind Elegant Data Solutions. O'Reilly Media. p. 257. ISBN 978-0-596-15711-1.

- ^ Jump up to: a b Hilbert & López 2011

- Jump up ^ "IBM What is big data? — Bringing big data to the enterprise". www.ibm.com. Retrieved 2013-08-26.

- Jump up ^ Oracle and FSN, "Mastering Big Data: CFO Strategies to Transform Insight into Opportunity", December 2012

- Jump up ^ Jacobs, A. (6 July 2009). "The Pathologies of Big Data". ACMQueue.

- Jump up ^ Magoulas, Roger; Lorica, Ben (February 2009). "Introduction to Big Data". Release 2.0 (Sebastopol CA: O’Reilly Media) (11).

- ^ Jump up to: a b Snijders, C., Matzat, U., & Reips, U.-D. (2012). ‘Big Data’: Big gaps of knowledge in the field of Internet. International Journal of Internet Science, 7, 1-5. http://www.ijis.net/ijis7_1/ijis7_1_editorial.html

- Jump up ^ Laney, Douglas. "3D Data Management: Controlling Data Volume, Velocity and Variety". Gartner. Retrieved 6 February 2001.

- Jump up ^ Beyer, Mark. "Gartner Says Solving 'Big Data' Challenge Involves More Than Just Managing Volumes of Data". Gartner. Archived from the original on 10 July 2011. Retrieved 13 July 2011.

- Jump up ^ Laney, Douglas. "The Importance of 'Big Data': A Definition". Gartner. Retrieved 21 June 2012.

- Jump up ^ "What is Big Data?". Villanova University.

- ^ Jump up to: a b Billings S.A. "Nonlinear System Identification: NARMAX Methods in the Time, Frequency, and Spatio-Temporal Domains". Wiley, 2013

- Jump up ^ Delort P., Big data Paris 2013 http://www.andsi.fr/tag/dsi-big-data/

- Jump up ^ Delort P., Big Data car Low-Density Data ? La faible densité en information comme facteur discriminant http://lecercle.lesechos.fr/entrepreneur/tendances-innovation/221169222/big-data-low-density-data-faible-densite-information-com

- Jump up ^ "LHC Brochure, English version. A presentation of the largest and the most powerful particle accelerator in the world, the Large Hadron Collider (LHC), which started up in 2008. Its role, characteristics, technologies, etc. are explained for the general public.". CERN-Brochure-2010-006-Eng. LHC Brochure, English version. CERN. Retrieved 20 January 2013.

- Jump up ^ "LHC Guide, English version. A collection of facts and figures about the Large Hadron Collider (LHC) in the form of questions and answers.". CERN-Brochure-2008-001-Eng. LHC Guide, English version. CERN. Retrieved 20 January 2013.

- Jump up ^ Brumfiel, Geoff (19 January 2011). "High-energy physics: Down the petabyte highway". Nature 469. pp. 282–83. doi:10.1038/469282a.

- Jump up ^ Delort P., OECD ICCP Technology Foresight Forum, 2012. http://www.oecd.org/sti/ieconomy/Session_3_Delort.pdf#page=6

- Jump up ^ Preis, Tobias; Moat,, Helen Susannah; Stanley, H. Eugene; Bishop, Steven R. (2012). "Quantifying the Advantage of Looking Forward". Scientific Reports 2: 350. doi:10.1038/srep00350. PMC 3320057. PMID 22482034.

- Jump up ^ Marks, Paul (April 5, 2012). "Online searches for future linked to economic success". New Scientist. Retrieved April 9, 2012.

- Jump up ^ Johnston, Casey (April 6, 2012). "Google Trends reveals clues about the mentality of richer nations". Ars Technica. Retrieved April 9, 2012.

- Jump up ^ Tobias Preis (2012-05-24). "Supplementary Information: The Future Orientation Index is available for download". Retrieved 2012-05-24.

- Jump up ^ Webster, Phil. "Supercomputing the Climate: NASA's Big Data Mission". CSC World. Computer Sciences Corporation. Retrieved 2013-01-18.

- Jump up ^ Philip Ball (April 26, 2013). "Counting Google searches predicts market movements". Nature. Retrieved August 9, 2013.

- Jump up ^ Tobias Preis, Helen Susannah Moat and H. Eugene Stanley (2013). "Quantifying Trading Behavior in Financial Markets Using Google Trends". Scientific Reports 3: 1684. doi:10.1038/srep01684.

- Jump up ^ Nick Bilton (April 26, 2013). "Google Search Terms Can Predict Stock Market, Study Finds". New York Times. Retrieved August 9, 2013.

- Jump up ^ Christopher Matthews (April 26, 2013). "Trouble With Your Investment Portfolio? Google It!". TIME Magazine. Retrieved August 9, 2013.

- Jump up ^ Philip Ball (April 26, 2013). "Counting Google searches predicts market movements". Nature. Retrieved August 9, 2013.

- Jump up ^ Bernhard Warner (April 25, 2013). "'Big Data' Researchers Turn to Google to Beat the Markets". Bloomberg Businessweek. Retrieved August 9, 2013.

- Jump up ^ Hamish McRae (April 28, 2013). "Hamish McRae: Need a valuable handle on investor sentiment? Google it". The Independent. Retrieved August 9, 2013.

- Jump up ^ Richard Waters (April 25, 2013). "Google search proves to be new word in stock market prediction". Financial Times. Retrieved August 9, 2013.

- Jump up ^ David Leinweber (April 26, 2013). "Big Data Gets Bigger: Now Google Trends Can Predict The Market". Forbes. Retrieved August 9, 2013.

- Jump up ^ Jason Palmer (April 25, 2013). "Google searches predict market moves". BBC. Retrieved August 9, 2013.

- Jump up ^ Kalil, Tom. "Big Data is a Big Deal". White House. Retrieved 26 September 2012.

- Jump up ^ Executive Office of the President (March 2012). "Big Data Across the Federal Government". White House. Retrieved 26 September 2012.

- Jump up ^ "How big data analysis helped President Obama defeat Romney in 2012 Elections". Bosmol Social Media News. 8 February 2013. Retrieved 9 March 2013.

- Jump up ^ Hoover, J. Nicholas. "Government's 10 Most Powerful Supercomputers". Information Week. UBM. Retrieved 26 September 2012.

- Jump up ^ Bamford, James. "The NSA Is Building the Country’s Biggest Spy Center (Watch What You Say)". Wired Magazine. Retrieved 2013-03-18.

- Jump up ^ "Groundbreaking Ceremony Held for $1.2 Billion Utah Data Center". National Security Agency Central Security Service. Retrieved 2013-03-18.

- Jump up ^ Hill, Kashmir. "TBlueprints Of NSA's Ridiculously Expensive Data Center In Utah Suggest It Holds Less Info Than Thought". Forbes. Retrieved 2013-10-31.

- Jump up ^ Layton, Julia. "Amazon Technology". Money.howstuffworks.com. Retrieved 2013-03-05.

- Jump up ^ "Scaling Facebook to 500 Million Users and Beyond". Facebook.com. Retrieved 2013-07-21.

- Jump up ^ "FICO® Falcon® Fraud Manager". Fico.com. Retrieved 2013-07-21.

- Jump up ^ "eBay Study: How to Build Trust and Improve the Shopping Experience". Knowwpcarey.com. 2012-05-08. Retrieved 2013-03-05.

- Jump up ^ Wingfield, Nick (2013-03-12). "Predicting Commutes More Accurately for Would-Be Home Buyers - NYTimes.com". Bits.blogs.nytimes.com. Retrieved 2013-07-21.

- Jump up ^ UN GLobal Pulse (2012). Big Data for Development: Opportunities and Challenges (White p. by Letouzé, E.). New York: United Nations. Retrieved from http://www.unglobalpulse.org/projects/BigDataforDevelopment

- Jump up ^ WEF (World Economic Forum), & Vital Wave Consulting. (2012). Big Data, Big Impact: New Possibilities for International Development. World Economic Forum. Retrieved August 24, 2012, from http://www.weforum.org/reports/big-data-big-impact-new-possibilities-international-development

- ^ Jump up to: a b c d "Big Data for Development: From Information- to Knowledge Societies", Martin Hilbert (2013), SSRN Scholarly Paper No. ID 2205145). Rochester, NY: Social Science Research Network; http://papers.ssrn.com/abstract=2205145

- Jump up ^ "Elena Kvochko, Four Ways To talk About Big Data (Information Communication Technologies for Development Series)". worldbank.org. Retrieved 2012-05-30.

- Jump up ^ Bertolucci, Jeff "Hadoop: From Experiment To Leading Big Data Platform", "Information Week", 2013. Retrieved on 14 November 2013.

- Jump up ^ Webster, John. "MapReduce: Simplified Data Processing on Large Clusters", "Search Storage", 2004. Retrieved on 25 March 2013.

- Jump up ^ "Big Data Solution Offering". MIKE2.0. Retrieved 8 Dec 2013.

- Jump up ^ "Big Data Definition". MIKE2.0. Retrieved 9 March 2013.

- Jump up ^ Boja, C; Pocovnicu, A; Bătăgan, L. (2012). "Distributed Parallel Architecture for Big Data". Informatica Economica 16 (2): 116–127.

- Manyika, James; Chui, Michael; Bughin, Jacques; Brown, Brad; Dobbs, Richard; Roxburgh, Charles; Byers, Angela Hung (May 2011). Big Data: The next frontier for innovation, competition, and productivity. McKinsey Global Institute.

- "Future Directions in Tensor-Based Computation and Modeling". May 2009.

- Lu, Haiping; Plataniotis, K.N.; Venetsanopoulos, A.N. (2011). "A Survey of Multilinear Subspace Learning for Tensor Data". Pattern Recognition 44 (7): 1540–1551. doi:10.1016/j.patcog.2011.01.004.

- Monash, Curt (30 April 2009). "eBay’s two enormous data warehouses". Monash, Curt (6 October 2010). "eBay followup — Greenplum out, Teradata > 10 petabytes, Hadoop has some value, and more".

- "Resources on how Topological Data Analysis is used to analyze big data". Ayasdi.

- CNET News (April 1, 2011). "Storage area networks need not apply".

- "How New Analytic Systems will Impact Storage". September 2011.

- "Obama Administration Unveils 'Big Data' Initiative: Announces $200 Million In New R&D Investments". The White House.

- "AMPLab at the University of California, Berkeley". Amplab.cs.berkeley.edu. Retrieved 2013-03-05.

- "NSF Leads Federal Efforts In Big Data". National Science Foundation (NSF). 29 March 2012.

- Timothy Hunter; Teodor Moldovan; Matei Zaharia; Justin Ma; Michael Franklin; Pieter Abbeel; Alexandre Bayen (October 2011). "Scaling the Mobile Millennium System in the Cloud".

- Patterson, David (5 December 2011). "Computer Scientists May Have What It Takes to Help Cure Cancer". The New York Times.

- "Secretary Chu Announces New Institute to Help Scientists Improve Massive Data Set Research on DOE Supercomputers". Energy.gov.

- "Governor Patrick announces new initiative to strengthen Massachusetts’ position as a World leader in Big Data". Commonwealth of Massachusetts.

- "Big Data @ CSAIL". Bigdata.csail.mit.edu. 2013-02-22. Retrieved 2013-03-05.

- "Big Data Public Private Forum". Cordis.europa.eu. 2012-09-01. Retrieved 2013-03-05.

- "About | Battle of the Brains". Battleofthebrains.podbean.com. Retrieved 2013-07-21.

- "Big Data World Championships". Texata. Retrieved 2013-07-21.

- "Center for Intelligent Maintenance Systems (IMS Center)".

- Lee, Jay; Lapira, Edzel; Bagheri, Behrad; Kao, Hung-An (2013). "Recent Advances and Trends in Predictive Manufacturing Systems in Big Data Environment". Manufacturing Letters 1 (1).

- http://sites.tcs.com/big-data-study/manufacturing-big-data-benefits-challenges/#

- Lee, Jay; Wu, F.; Zhao, W.; Ghaffari, M.; Liao, L. (Jan 2013). "Prognostics and health management design for rotary machinery systems—Reviews, methodology and applications". Mechanical Systems and Signal Processing 42 (1).

- Graham, M. (2012). "Big data and the end of theory?". The Guardian.

- Shah, Shvetank; Horne, Andrew; Capellá, Jaime. "Good Data Won't Guarantee Good Decisions". Harvard Business Review. HBR.org. Retrieved 8 September 2012.

- Anderson, C. (2008, June 23). The End of Theory: The Data Deluge Makes the Scientific Method Obsolete. Wired Magazine, (Science: Discoveries). http://www.wired.com/science/discoveries/magazine/16-07/pb_theory

- Rauch, J. (2002). Seeing Around Corners. The Atlantic, (April), 35–48. http://www.theatlantic.com/magazine/archive/2002/04/seeing-around-corners/302471/

- Epstein, J. M., & Axtell, R. L. (1996). Growing Artificial Societies: Social Science from the Bottom Up. A Bradford Book.

- Delort, P. (2012). "Big data in Biosciences". Big Data Paris. http://www.bigdataparis.com/documents/Pierre-Delort-INSERM.pdf#page=5

- Ohm, Paul. "Don't Build a Database of Ruin". Harvard Business Review.

- Bond-Graham, Darwin (2013-12-03). "Iron Cagebook: The Logical End of Facebook's Patents". Counterpunch.org.

- Bond-Graham, Darwin (2013-09-11). "Inside the Tech Industry’s Startup Conference". Counterpunch.org.

- danah boyd (2010-04-29). "Privacy and Publicity in the Context of Big Data". WWW 2010 conference. Retrieved 2011-04-18.

- Jones, MB; Schildhauer, MP; Reichman, OJ; Bowers, S (2006). "The New Bioinformatics: Integrating Ecological Data from the Gene to the Biosphere" (PDF). Annual Review of Ecology, Evolution, and Systematics 37 (1): 519–544. doi:10.1146/annurev.ecolsys.37.091305.110031.

External links

- Big data Analytics and Predictive Analytics

- Multidisciplinary Approaches to Big Social Data Analysis (WWW13 workshop)

- What is Big Data? The definition

- Let's Talk Big Data Value Chain!

- Big Data: No Replacement for the Financial Data Warehouse (WallStreet Technology)

- How do players approach on big data?

- High Performance, Cloud and Symbolic Computing in Big-Data Problems applied to Mathematical Modeling of Comparative Genomics

Further reading

- "Big Data for Good". ODBMS.org. June 5, 2012. Retrieved 2013-11-12.

- Hilbert, Martin; López, Priscila (2011). "The World's Technological Capacity to Store, Communicate, and Compute Information". Science 332 (6025): 60–65. doi:10.1126/science.1200970. PMID 21310967.

- "The Rise of Industrial Big Data". GE Intelligent Platforms. Retrieved 2013-11-12.